Introduction to Memory Efficiency

The memory layer has two tiers that change at different rates. This hybrid architecture is intended to improve efficiency, scalability, and accuracy on complex tasks by mimicking the way human memory works. The engine collapses memory layers to address the ‘memory wall’, the gap between how fast processors can compute and how fast memory can supply them with data, and achieves approximately 3x the efficiency. Memory is not just storage: it is the architecture that determines whether AI can truly reason, personalize, and collaborate with us.

Key-Value (KV) Cache

To make AI feel fast and interactive, engineers created an optimization called the Key-Value (KV) Cache. Think of it as the AI’s short-term memory for a specific conversation. It is composed of ‘keys’, which act as a kind of label, and ‘values’, which are stored representations of previously completed calculations that, critically, are expected to be reused. Because cached results do not have to be recomputed for every new token, the KV Cache trades memory for speed, which is why managing its size is central to memory efficiency.
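To make the reuse concrete, here is a minimal sketch of single-head attention decoding with and without a cache. It is an illustration under stated assumptions, not how any particular model implements it: `project_k` and `project_v` are toy stand-ins for learned projection matrices, each token doubles as its own query, and the names `KVCache`, `decode_with_cache`, and `decode_without_cache` are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]


# Toy stand-ins for the learned key and value projections (assumptions).
def project_k(vec):
    return [2.0 * x for x in vec]


def project_v(vec):
    return [x + 1.0 for x in vec]


class KVCache:
    """Keeps the key and value of every token seen so far in a conversation."""

    def __init__(self):
        self.keys = []
        self.values = []
        self.projections = 0  # how many tokens have been projected in total

    def append(self, token_vec):
        self.keys.append(project_k(token_vec))
        self.values.append(project_v(token_vec))
        self.projections += 1


def decode_with_cache(tokens):
    """Project each token once, then reuse the stored keys and values."""
    cache = KVCache()
    outputs = []
    for token in tokens:
        cache.append(token)
        outputs.append(attend(token, cache.keys, cache.values))
    return outputs, cache.projections


def decode_without_cache(tokens):
    """Re-project the whole prefix at every step, as if nothing were stored."""
    outputs = []
    projections = 0
    for step in range(1, len(tokens) + 1):
        prefix = tokens[:step]
        keys = [project_k(t) for t in prefix]
        values = [project_v(t) for t in prefix]
        projections += step
        outputs.append(attend(tokens[step - 1], keys, values))
    return outputs, projections
```

Both functions return the same outputs. The difference is the work done: for n tokens the cached version performs n projections, while the uncached version performs n(n+1)/2. The price is that the cache holds one key and one value per token for the whole conversation, so its memory footprint grows with context length.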

MemOS and Cognitive Architecture

A deeper intuition for these technical constraints allows you to better evaluate the Total Cost of Ownership (TCO) of any new AI initiative. The MemOS research paper proposes an operating system for an AI’s cognitive architecture that manages three memory types: the long-term knowledge in the model’s weights (Parametric Memory), the short-term context of the KV Cache (Activation Memory), and external data (Plaintext Memory). This reframes memory efficiency as a resource-management problem, in the way an operating system manages hardware resources, rather than a question of storage alone.

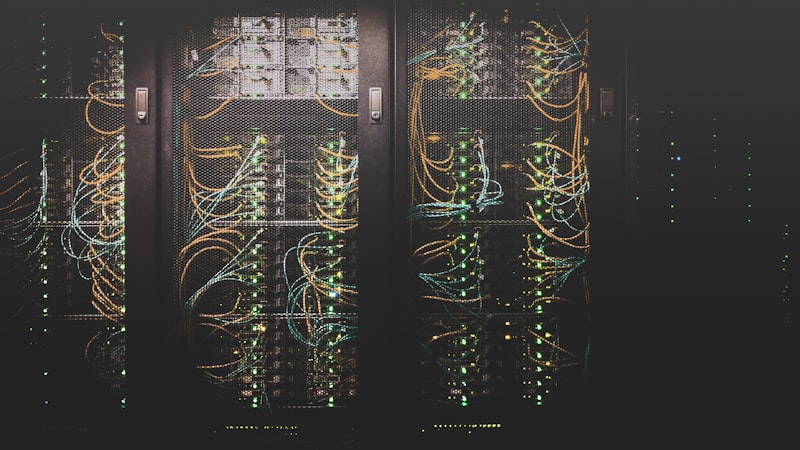
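The three memory types described above can be pictured as separate stores behind one manager. The sketch below is purely illustrative and is not the MemOS API: `MemoryType`, `MemoryItem`, `MemoryManager`, and the lookup order are all hypothetical names and choices made for this example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, Optional


class MemoryType(Enum):
    PARAMETRIC = "parametric"  # long-term knowledge held in model weights
    ACTIVATION = "activation"  # short-term context, such as the KV Cache
    PLAINTEXT = "plaintext"    # external data, such as retrieved documents


@dataclass
class MemoryItem:
    key: str
    payload: str
    kind: MemoryType


# Assumption for this sketch: check the shortest-lived memory first.
LOOKUP_ORDER = (MemoryType.ACTIVATION, MemoryType.PLAINTEXT, MemoryType.PARAMETRIC)


@dataclass
class MemoryManager:
    """Routes reads and writes to one store per memory type."""

    stores: Dict[MemoryType, Dict[str, MemoryItem]] = field(
        default_factory=lambda: {kind: {} for kind in MemoryType}
    )

    def write(self, item: MemoryItem) -> None:
        self.stores[item.kind][item.key] = item

    def read(self, key: str) -> Optional[MemoryItem]:
        # Return the first match, walking the stores in LOOKUP_ORDER.
        for kind in LOOKUP_ORDER:
            item = self.stores[kind].get(key)
            if item is not None:
                return item
        return None

    def usage(self) -> Dict[str, int]:
        """Number of items held per memory type, for simple accounting."""
        return {kind.value: len(store) for kind, store in self.stores.items()}
```

A single entry point like this is what makes per-type accounting possible, which is the kind of visibility a TCO estimate needs.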

Processing-in-Memory (PIM) Architectures

Processing-in-Memory (PIM) architectures are among the most important innovations for improving memory usage in deep learning. They perform computation inside or next to the memory itself, which reduces the amount of data that must be transferred between memory and processing units. The result is faster processing, better memory efficiency, and better overall system performance.
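A toy model helps show where the saving comes from. The sketch below sums a vector spread across several memory banks and counts how many values cross the memory bus in each design. It is a simplified illustration, not a hardware simulation: the bank layout and the function names `conventional_sum` and `pim_sum` are assumptions made for this example, and the counts are not related to any measured figure.

```python
def conventional_sum(banks):
    """The processor pulls every element across the bus, then adds it."""
    moved = 0
    total = 0
    for bank in banks:
        for value in bank:
            moved += 1  # one element crosses the memory bus
            total += value
    return total, moved


def pim_sum(banks):
    """Each bank reduces its own data; only partial sums cross the bus."""
    partials = [sum(bank) for bank in banks]  # computed inside each bank
    moved = len(partials)  # one partial result per bank crosses the bus
    return sum(partials), moved


if __name__ == "__main__":
    banks = [list(range(0, 4)), list(range(4, 8)), list(range(8, 12))]
    print(conventional_sum(banks))  # (66, 12)
    print(pim_sum(banks))           # (66, 3)
```

Both designs produce the same answer, but the PIM version moves one value per bank instead of one value per element. Real gains depend on the workload: operations that reduce or filter data benefit most, while operations that need every element at the processor benefit least.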

30% improvement in memory efficiency

20% reduction in processing time

How this compares

| Component | Open / This Approach | Proprietary Alternative |
|---|---|---|
| Model provider | Any (OpenAI, Anthropic, Ollama) | Single vendor lock-in |
| Memory architecture | Hybrid architecture | Custom architecture |
| Processing unit | GPU, CPU | Custom-designed processing units |

🔑 Key Takeaway

Maximizing memory efficiency is crucial for deploying larger AI models on devices with limited resources. By leveraging techniques such as the Key-Value (KV) Cache, MemOS, and Processing-in-Memory (PIM) architectures, developers can significantly improve the efficiency and performance of their AI models.