Introduction to Decoupled DiLoCo

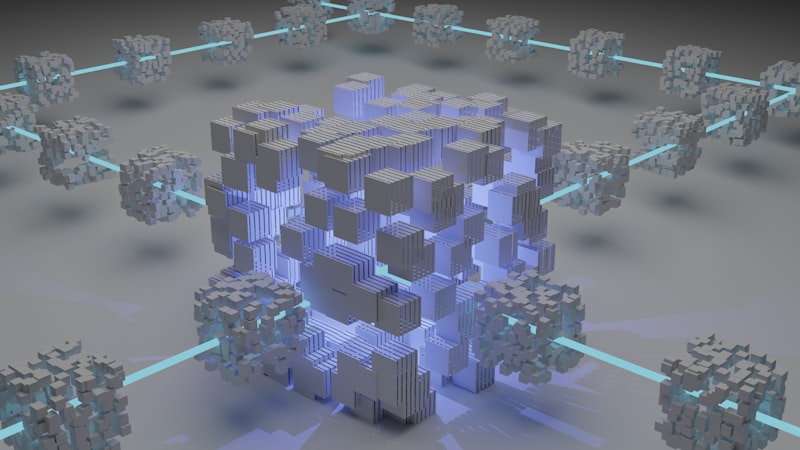

Decoupled DiLoCo is designed to address the single-point-of-failure problem in large-scale AI training by dividing training across asynchronous, fault-isolated ‘islands’ of compute called learner units. This approach allows for the training of large language models across geographically distant data centers without requiring the tight synchronization that makes conventional approaches brittle at scale.

The architecture enables faster, more resilient training across data centers and can use mixed-generation hardware for large language model pre-training.

Decoupled DiLoCo builds on two earlier advances: Pathways, which introduced a distributed AI system based on asynchronous data flow, and DiLoCo, which dramatically reduced the bandwidth required between distributed data centers. The research team validated Decoupled DiLoCo at production scale by training a 12-billion-parameter model across four separate U.S. regions using just 2–5 Gbps of wide-area networking.
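To give a feel for why bandwidth matters at this scale, the following back-of-the-envelope sketch estimates how long one full exchange of the model's parameters would take over the quoted wide-area link speeds. The 12 billion parameters and the 2–5 Gbps range come from the text; the 2 bytes per parameter (16-bit values), the absence of compression, and the function name `sync_transfer_seconds` are assumptions made purely for illustration.

```python
def sync_transfer_seconds(num_params: float, bytes_per_param: float, link_gbps: float) -> float:
    """Seconds needed to send one full copy of the parameters over a link."""
    total_bits = num_params * bytes_per_param * 8
    return total_bits / (link_gbps * 1e9)


PARAMS = 12e9        # 12 billion parameters (from the text)
BYTES_PER_PARAM = 2  # assumption: 16-bit values, no compression

for gbps in (2, 5):
    secs = sync_transfer_seconds(PARAMS, BYTES_PER_PARAM, gbps)
    print(f"{gbps} Gbps: {secs:.1f} s per full parameter exchange")
# 2 Gbps: 96.0 s per full parameter exchange
# 5 Gbps: 38.4 s per full parameter exchange
```

Under these assumptions, a single exchange takes tens of seconds, so exchanging parameters on every training step over such a link would likely dominate training time. That is the motivation for approaches that communicate rarely.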

The Decoupled DiLoCo architecture is self-healing. In tests that used chaos engineering to simulate real hardware failures, the system maintained 88% goodput, compared with just 27% for standard Data-Parallel training under high failure rates, and it seamlessly reintegrated offline learner units when they came back online.

Decoupled DiLoCo Architecture

The Decoupled DiLoCo architecture splits compute into asynchronous, fault-isolated ‘islands’ called learner units. Each learner unit is responsible for a portion of the training process, and the system continues to operate even if one or more learner units fail.
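The effect of fault isolation on goodput can be illustrated with a toy simulation. This is a minimal sketch, not the authors' chaos-engineering setup, and it does not attempt to reproduce the reported figures. It assumes that each unit fails independently with a fixed per-step probability, that a failed unit stays offline for a fixed number of steps, and that goodput is the fraction of unit-steps spent on useful work. The function name `simulate_goodput` and all parameters are hypothetical.

```python
import random
from typing import Tuple


def simulate_goodput(num_units: int, steps: int, fail_prob: float,
                     repair_steps: int, seed: int = 0) -> Tuple[float, float]:
    """Return (synchronous_goodput, decoupled_goodput) for a toy failure model.

    Both schemes see the same failure trace. In the synchronous scheme a step
    only counts when every unit is healthy; in the decoupled scheme each
    healthy unit makes progress on its own.
    """
    rng = random.Random(seed)
    down_for = [0] * num_units  # remaining offline steps per unit
    sync_useful = 0             # useful unit-steps with lockstep training
    decoupled_useful = 0        # useful unit-steps with isolated units
    for _ in range(steps):
        for u in range(num_units):
            if down_for[u] > 0:
                down_for[u] -= 1              # unit is still being repaired
            elif rng.random() < fail_prob:
                down_for[u] = repair_steps    # unit fails this step
        healthy = sum(1 for d in down_for if d == 0)
        decoupled_useful += healthy
        if healthy == num_units:
            sync_useful += num_units
    total = num_units * steps
    return sync_useful / total, decoupled_useful / total


sync_g, dec_g = simulate_goodput(num_units=8, steps=10_000,
                                 fail_prob=0.01, repair_steps=20)
print(f"synchronous: {sync_g:.2f}, decoupled: {dec_g:.2f}")
```

In this model the synchronous scheme loses every step in which any single unit is down, so its goodput falls quickly as the number of units or the failure rate grows, while the decoupled scheme only loses the work of the units that are actually offline.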

Decoupled DiLoCo enables training across data centers around the world on heterogeneous hardware, without halting the whole system when hardware fails.
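The general idea of low-communication training that DiLoCo-style methods rely on can be sketched as follows: each learner trains locally for many steps without communicating, and only the resulting parameter changes are merged once per round. This is a simplified illustration on a toy quadratic objective, not the published algorithm. It swaps in plain gradient descent for both the local and the merge step, and skipping offline learners during the merge is an assumption about how failures might be tolerated, not a confirmed detail of Decoupled DiLoCo. All function and parameter names are hypothetical.

```python
from typing import List


def inner_steps(params: List[float], target: List[float],
                lr: float, num_steps: int) -> List[float]:
    """Local gradient descent on 0.5 * ||params - target||^2, no communication."""
    local = list(params)
    for _ in range(num_steps):
        local = [p - lr * (p - t) for p, t in zip(local, target)]
    return local


def outer_round(global_params: List[float], targets: List[List[float]],
                online: List[bool], inner_lr: float = 0.1,
                num_inner: int = 20, outer_lr: float = 1.0) -> List[float]:
    """One communication round: local training, then a single merge of deltas."""
    deltas = []
    for target, is_online in zip(targets, online):
        if not is_online:
            continue  # assumption: an offline learner is simply skipped this round
        local = inner_steps(global_params, target, inner_lr, num_inner)
        # Parameter change produced by local training, sent once per round.
        deltas.append([g - l for g, l in zip(global_params, local)])
    if not deltas:
        return list(global_params)  # nobody reported; keep parameters unchanged
    avg = [sum(col) / len(deltas) for col in zip(*deltas)]
    return [g - outer_lr * d for g, d in zip(global_params, avg)]


# Three learners, each with its own toy "data" (a target vector).
targets = [[1.0, 0.0], [3.0, 2.0], [5.0, 4.0]]
params = [0.0, 0.0]
for round_idx in range(30):
    online = [True, round_idx % 5 != 0, True]  # learner 1 drops out periodically
    params = outer_round(params, targets, online)
print(params)
```

With `num_inner = 20`, the learners communicate once per 20 local steps rather than on every step, which is the source of the bandwidth saving. A learner that returns after being offline starts its next round from the current global parameters, so no global restart is needed in this sketch.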

The architecture is designed to maximize training goodput while maintaining competitive model performance across text and vision tasks, for both dense and mixture-of-experts architectures. In failure-prone environments simulated with millions of chips, it achieves significantly better training efficiency with strictly zero global downtime.

Benefits of Decoupled DiLoCo

Decoupled DiLoCo offers several benefits over traditional distributed AI training approaches. It eliminates the single-point-of-failure problem in large-scale AI training, allowing the system to continue to operate even if one or more learner units fail.

Because it can train across data centers around the world on heterogeneous hardware without halting when hardware fails, it is an attractive option for organizations with geographically distributed data centers or those that need to train large language models on mixed-generation hardware.

Overall, the benefits over traditional distributed AI training approaches come down to improved fault tolerance, support for heterogeneous hardware, and increased training efficiency.

Goodput under high failure rates: 88% maintained by Decoupled DiLoCo, versus 27% for standard Data-Parallel training.

Conclusion

Decoupled DiLoCo is a novel approach for resilient and distributed AI training that enables large-scale model development. It builds on two earlier advances: Pathways, which introduced a distributed AI system based on asynchronous data flow, and DiLoCo, which dramatically reduced the bandwidth required between distributed data centers.

The architecture splits compute into asynchronous, fault-isolated ‘islands’ called learner units. This allows large language models to be trained across geographically distant data centers without the tight synchronization that makes conventional approaches brittle at scale.

Decoupled DiLoCo offers several benefits over traditional distributed AI training approaches, including improved fault tolerance, support for heterogeneous hardware, and increased training efficiency. It is an attractive option for organizations with geographically distributed data centers or those that need to train large language models using mixed-generation hardware.

Overall, Decoupled DiLoCo is a significant advancement in the field of distributed AI training, and it has the potential to enable the development of larger and more complex AI models.

How Decoupled DiLoCo Compares

| Component | Decoupled DiLoCo Approach | Conventional Alternative |
|---|---|---|
| Distributed Training Architecture | Decoupled DiLoCo | Data-Parallel Training |
| Fault Tolerance | Improved fault tolerance | Limited fault tolerance |
| Hardware Support | Supports heterogeneous hardware | Limited hardware support |

🔑 Key Takeaway

Decoupled DiLoCo is a novel approach for resilient and distributed AI training that enables large-scale model development. It offers several benefits over traditional distributed AI training approaches, including improved fault tolerance, support for heterogeneous hardware, and increased training efficiency.
